You’ve doom-scrolled TikTok for hours. Suddenly, every video matches that one funny clip you liked. Why? The recommendation algorithm is built to keep you watching.

A feedback loop in algorithms is a cycle. The algorithm’s output feeds back as new input. This refines results over time. You see it everywhere. Social feeds personalize fast. Streaming picks your next binge. But it cuts both ways. Echo chambers form. Views narrow.

This matters because tech shapes your day. Loops boost engagement. They also risk bias or addiction. In this post, you’ll grasp how these loops tick. You’ll learn the two main types, positive and negative. Real examples from Netflix to AI training follow. Plus pros, cons, and 2026 trends. Ready to see behind the screen?

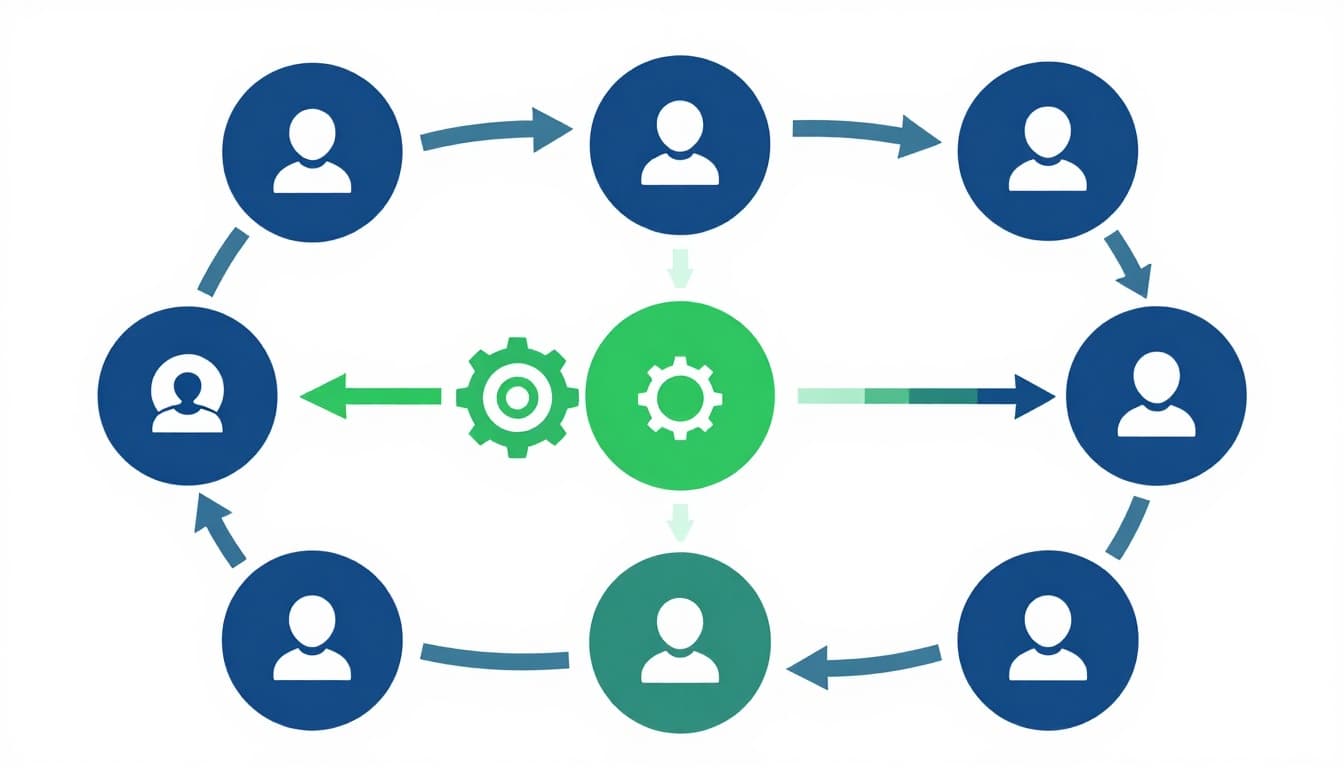

How Feedback Loops Work Step by Step in Algorithms

Feedback loops start simple. User actions feed data in. Algorithms process it. They spit out suggestions. Your reaction loops back. This repeats. Results sharpen with each turn.

Think of a snowball rolling downhill. It picks up speed and size. That’s amplification in action. Error correction works the other way. Picture backpropagation in machine learning. The model measures its errors. It adjusts its weights to shrink them next time.

Here’s a visual of the core cycle.

The process has five steps. First, input arrives from clicks or views. Next, the algorithm crunches patterns. Then, output appears as tailored picks. Feedback comes from your likes or skips. Finally, the cycle repeats. Smarter picks emerge.
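The five steps can be sketched in a few lines of Python. This is a toy, not how any real platform works: the catalog, the `recommend` and `run_loop` names, and the rule that a skip subtracts one point are all assumptions made for illustration. It also keeps recommending titles you have already seen, which a real system would filter out.

```python
from collections import Counter

# Hypothetical catalog (title -> genre), invented for this sketch.
CATALOG = {
    "Love in Paris": "romcom",
    "Second Dates": "romcom",
    "Night Chase": "thriller",
    "Cold Trail": "thriller",
    "Deep Ocean": "documentary",
}


def recommend(preferences: Counter, k: int = 2) -> list[str]:
    """Steps 2 and 3: rank titles by the learned weight of their genre."""
    ranked = sorted(CATALOG, key=lambda title: preferences[CATALOG[title]], reverse=True)
    return ranked[:k]


def run_loop(first_watch: str, liked_genre: str, rounds: int = 3) -> list[list[str]]:
    """Run the cycle and return the picks shown in each round."""
    preferences = Counter()
    preferences[CATALOG[first_watch]] += 1           # step 1: input
    history = []
    for _ in range(rounds):                          # step 5: the cycle repeats
        picks = recommend(preferences)               # steps 2 and 3: process, output
        history.append(picks)
        for title in picks:                          # step 4: feedback
            genre = CATALOG[title]
            # Simulated user: likes one genre, skips everything else.
            preferences[genre] += 1 if genre == liked_genre else -1
    return history


history = run_loop("Love in Paris", liked_genre="thriller")
for round_number, picks in enumerate(history, start=1):
    print(round_number, picks)
```

The first round follows the single rom-com watch. After two skips, the loop swings to thrillers and stays there.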

For more on AI learning from mistakes, check Zendesk’s explanation of feedback loops.

The Role of User Actions as Starting Input

Everything begins with you. Likes, watches, or swipes create data. This input kickstarts the loop. No action, no personalization.

Take Netflix. You finish a thriller. That watch logs as data. The system notes your taste. Suddenly, similar shows pop up. Your habits make the loop yours alone.

Small choices snowball. A quick like shifts your entire feed. Algorithms thrive on this fresh input.
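To make "actions become data" concrete, here is a minimal sketch that turns a list of events into a taste profile. The signal weights are made up for this example; real services tune their own and do not publish them.

```python
from collections import defaultdict

# Hypothetical weights per action. A finished show counts more than a view,
# and a skip counts against the genre.
SIGNAL_WEIGHTS = {"finish": 3.0, "like": 2.0, "view": 1.0, "skip": -1.0}


def build_profile(events: list[tuple[str, str]]) -> dict[str, float]:
    """Sum the weighted signals per genre from (genre, action) events."""
    profile = defaultdict(float)
    for genre, action in events:
        profile[genre] += SIGNAL_WEIGHTS[action]
    return dict(profile)


events = [
    ("thriller", "finish"),
    ("thriller", "like"),
    ("romcom", "skip"),
    ("documentary", "view"),
]
profile = build_profile(events)
print(profile)
```

Two thriller actions already outweigh everything else. That is how a quick like can tilt a feed.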

Processing and Output: Where the Magic Happens

Algorithms take over here. They scan past data for patterns. Machine learning models predict likes. Output rolls out as recommendations.

Processing uses math under the hood. It weighs your history against millions of users. Output aims to hook you. Then the loop awaits your response.

This step feels instant. But it builds on cycles from before.
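One common way to weigh your history against other users is user-based collaborative filtering with cosine similarity. The sketch below assumes that technique; the post does not say which method any given service uses. The users, titles, and ratings are invented.

```python
import math

# Hypothetical ratings: user -> {title: rating}. Invented for this sketch.
RATINGS = {
    "you": {"Night Chase": 1.0, "Cold Trail": 1.0},
    "ana": {"Night Chase": 1.0, "Cold Trail": 1.0, "Dark Harbor": 1.0},
    "ben": {"Love in Paris": 1.0, "Second Dates": 1.0},
}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    """Cosine similarity between two sparse rating vectors."""
    dot = sum(a[title] * b[title] for title in set(a) & set(b))
    norm_a = math.sqrt(sum(value * value for value in a.values()))
    norm_b = math.sqrt(sum(value * value for value in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def recommend_for(user: str, ratings: dict[str, dict[str, float]]) -> list[str]:
    """Score unseen titles by the similarity of the users who rated them."""
    seen = ratings[user]
    scores: dict[str, float] = {}
    for other, theirs in ratings.items():
        if other == user:
            continue
        similarity = cosine(seen, theirs)
        for title, rating in theirs.items():
            if title not in seen:
                scores[title] = scores.get(title, 0.0) + similarity * rating
    return sorted(scores, key=scores.get, reverse=True)


print(recommend_for("you", RATINGS))
```

Ana shares your two thrillers, so her third pick ranks first. Ben shares nothing with you, so his titles score zero.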

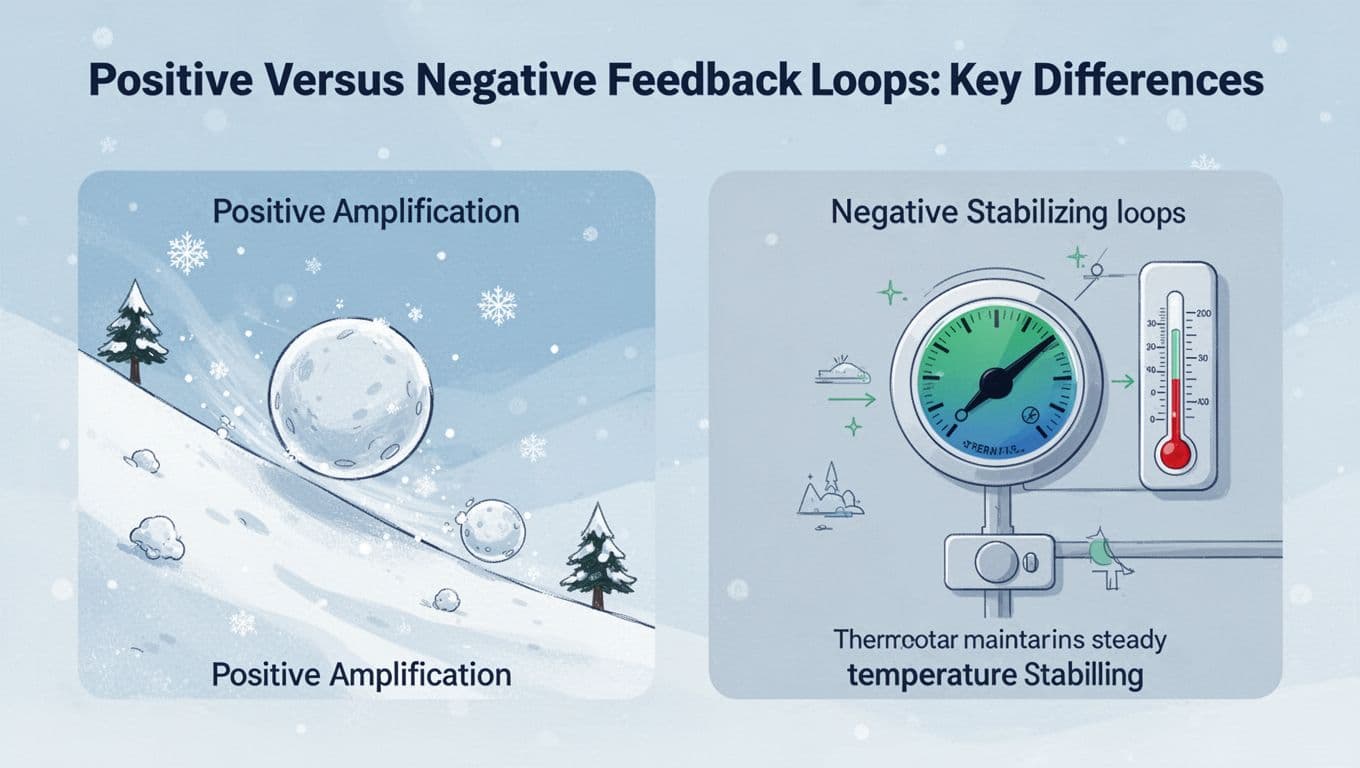

Positive Versus Negative Feedback Loops: Key Differences

Loops split into two camps. Positive ones amplify. Negative ones stabilize. Each shapes algorithms differently.

Positive loops grow changes. They boost hot content fast. Negative loops correct drifts. They keep predictions on track.

| Type | Effect | Example Analogy | Strength |
|---|---|---|---|
| Positive | Amplifies differences | Snowball downhill | Quick personalization |
| Negative | Reduces differences | Thermostat control | Reliable accuracy |

Positive loops suit viral hits. But extremes can form. Negative loops ensure balance. Yet they slow bold shifts.
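The difference comes down to one sign. In the sketch below, each step changes a value in proportion to its gap from a target. A positive gain widens the gap (the snowball). A negative gain shrinks it (the thermostat). The function name, the gain of 0.5, and the temperatures are illustrative assumptions.

```python
def simulate(gain: float, start: float, target: float, steps: int) -> list[float]:
    """Apply x_next = x + gain * (x - target) for a number of steps."""
    values = [start]
    for _ in range(steps):
        x = values[-1]
        values.append(x + gain * (x - target))
    return values


# Start one degree above a 20-degree target.
positive = simulate(gain=0.5, start=21.0, target=20.0, steps=4)
negative = simulate(gain=-0.5, start=21.0, target=20.0, steps=4)

print(positive)  # [21.0, 21.5, 22.25, 23.375, 25.0625]
print(negative)  # [21.0, 20.5, 20.25, 20.125, 20.0625]
```

Same starting point, same rule, opposite sign. One run drifts away from the target. The other settles toward it.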

See this contrast visually.

For a deeper dive on how these loops stabilize or run away, read this LinuxCode article.

When Positive Loops Snowball into Hits or Extremes

Positive loops feed success. More views mean more pushes. A video goes viral. Or your feed echoes one view.

Social media loves this. Engagement spikes. But watch out. Narrow tastes dominate. Stars emerge. Diversity fades.
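Here is a toy version of "more views mean more pushes". It assumes a made-up rule: each round, the current leader gets 70 percent of new impressions, and every impression becomes a view. Real ranking systems are far more complex. The point is only how a one-view head start compounds.

```python
def push_rounds(views: dict[str, int], rounds: int,
                impressions: int = 100, top_percent: int = 70) -> dict[str, int]:
    """Each round, give the current leader the largest share of new views."""
    views = dict(views)  # work on a copy so the input stays unchanged
    for _ in range(rounds):
        leader = max(views, key=views.get)
        others = [video for video in views if video != leader]
        top = impressions * top_percent // 100
        views[leader] += top
        for video in others:
            views[video] += (impressions - top) // len(others)
    return views


start = {"A": 11, "B": 10}  # two near-identical videos
result = push_rounds(start, rounds=5)
print(result)  # {'A': 361, 'B': 160}
```

Video A began with about 52 percent of the views. Five rounds later it holds about 69 percent, and nothing about its quality changed.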

Negative Loops Keep Things Steady and Accurate

Negative loops fight drift. Errors trigger fixes. Predictions improve steadily.

In AI training, this shines. Wrong guesses produce an error signal that adjusts the model. Game AIs improve at chess this way. Predictions stay on track instead of drifting.
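The error-correcting loop is easiest to see in plain gradient descent on a one-weight model. Backpropagation applies the same idea across many layers. The data, learning rate, and `train` function below are assumptions chosen to keep the sketch small.

```python
def train(samples: list[tuple[float, float]],
          learning_rate: float = 0.1, epochs: int = 50) -> tuple[float, list[float]]:
    """Fit y = w * x by nudging w against the prediction error."""
    w = 0.0
    mean_errors = []
    for _ in range(epochs):
        total = 0.0
        for x, y in samples:
            error = w * x - y                # how wrong the guess was
            w -= learning_rate * error * x   # negative feedback: push w the other way
            total += error ** 2              # (the factor 2 of the gradient is folded into the rate)
        mean_errors.append(total / len(samples))
    return w, mean_errors


# Toy data that follows y = 2x exactly.
samples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
w, mean_errors = train(samples)
print(round(w, 4), mean_errors[0] > mean_errors[-1])
```

Each wrong guess pushes the weight in the opposite direction of the error. The weight settles near 2 and the error shrinks toward zero.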

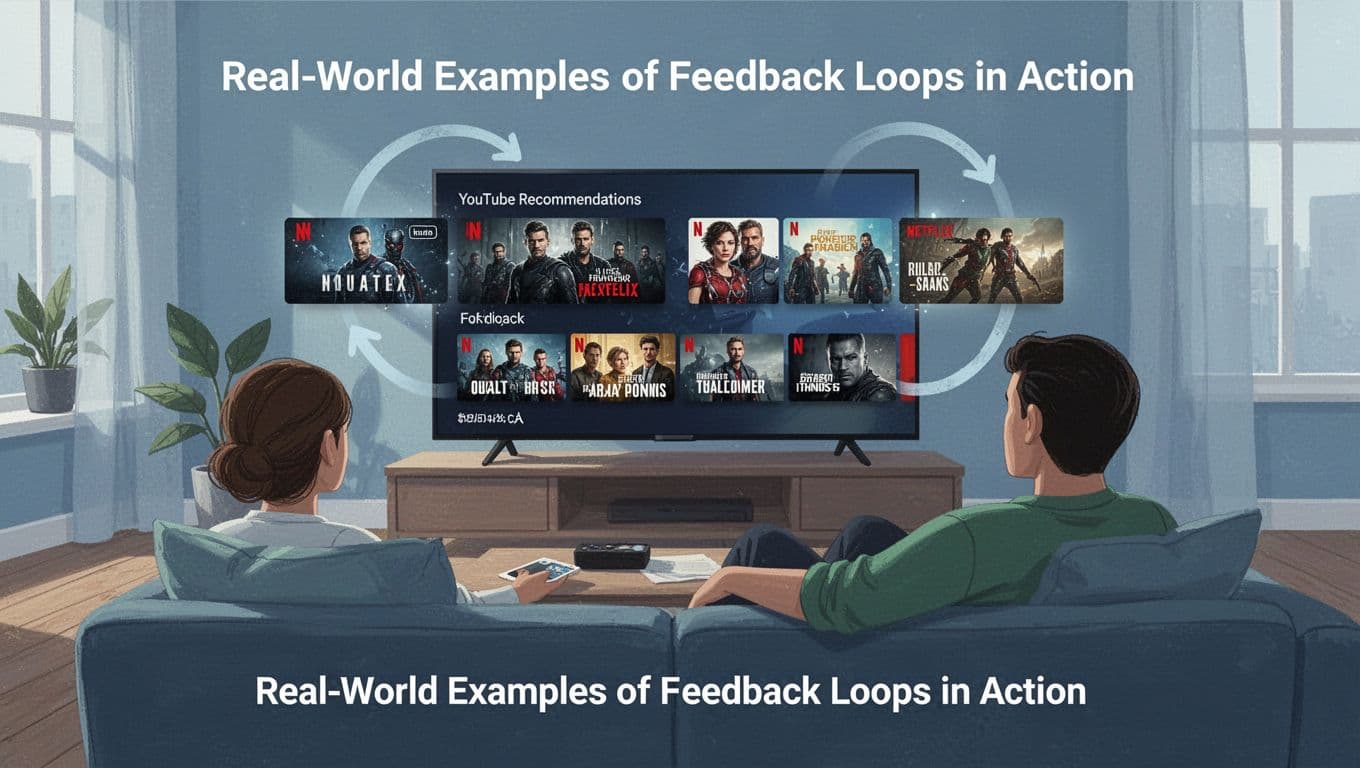

Real-World Examples of Feedback Loops in Action

Loops power apps you use daily. They personalize ruthlessly. Binge a genre? Expect more.

Picture settling in for Netflix. One show hooks you. Boom, your queue fills with matches. Loops at work.

Netflix details their approach in this research on recommender feedback loops.

Streaming Services Like Netflix and YouTube

Watch history feeds the beast. Algorithms match patterns. You skip rom-coms? Thrillers flood in.

Loops trap you in bubbles. Great for binges. Risky for variety.
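You can put a number on variety. One option, assumed here, is Shannon entropy over the genres in a feed: higher means more mixed, zero means a single genre. The two sample feeds are invented.

```python
import math
from collections import Counter


def feed_entropy(genres: list[str]) -> float:
    """Shannon entropy in bits of the genre mix in a feed."""
    counts = Counter(genres)
    total = len(genres)
    return -sum((count / total) * math.log2(count / total) for count in counts.values())


varied = ["thriller", "romcom", "documentary", "comedy"]
narrow = ["thriller", "thriller", "thriller", "romcom"]

print(feed_entropy(varied))            # 2.0
print(round(feed_entropy(narrow), 3))  # 0.811
```

Track that number over a few weeks. If it keeps falling, the loop is narrowing your feed.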

Social Media Feeds on TikTok and X

Like a dance video? More appear. Swipes signal taste. Feeds narrow fast.

TikTok excels here. Your first few videos test your taste. Loops refine from there.

Behind the Scenes in Machine Learning Models

Training uses negative loops. Predictions err. Feedback tweaks the model. AlphaGo learned to beat top human Go players through cycles like these.

Cycles build skill. Errors shrink over time.

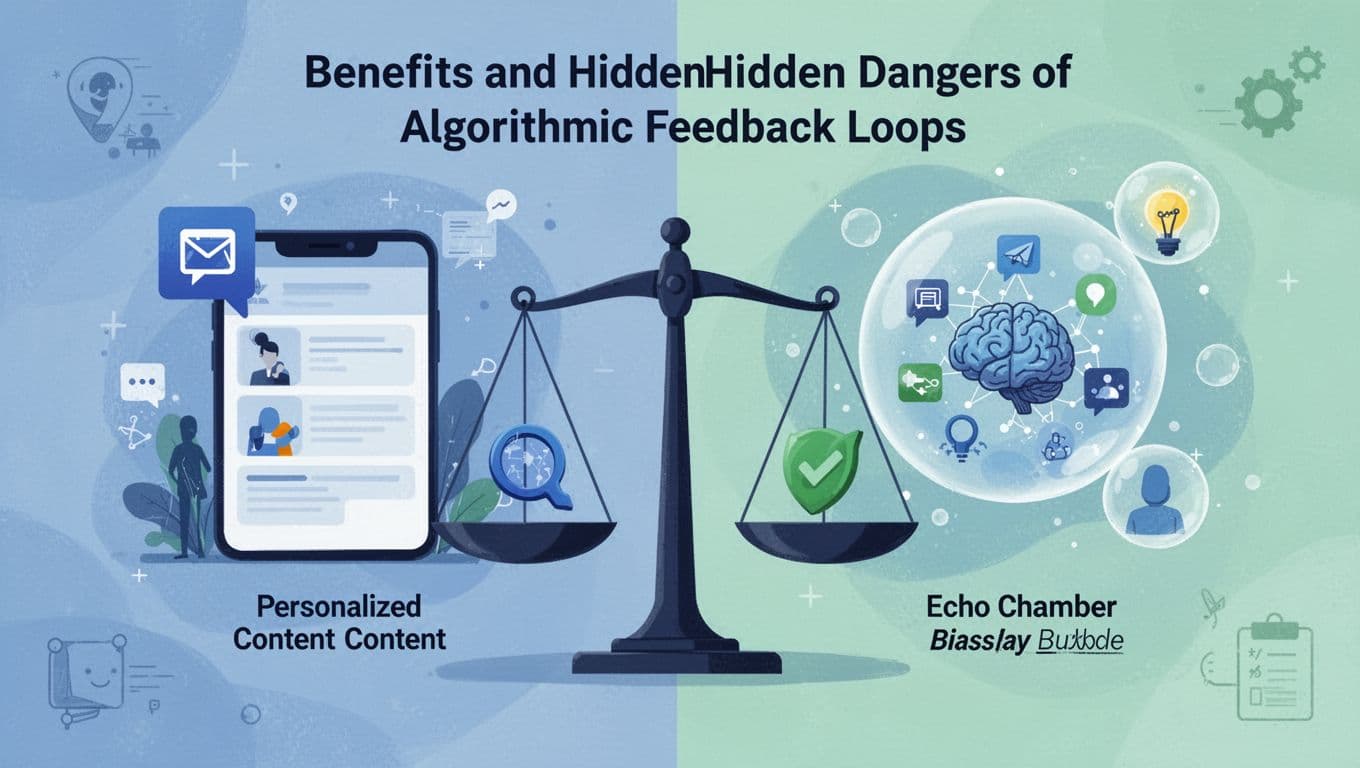

Benefits and Hidden Dangers of Algorithmic Feedback Loops

Loops deliver wins. Content fits you perfectly. Platforms keep you longer. Learning speeds up.

But traps lurk. Echo chambers isolate. Bias from bad data grows. Extreme posts can become addictive.

- Benefits: Tailored feeds save time. Engagement soars. AI improves quickly.

- Dangers: Views polarize. Biases amplify. Habits turn addictive.

Awareness breaks cycles. Mix your inputs. For echo chamber risks, see this analysis on AI amplification.

What’s Next for Feedback Loops in 2026 and Beyond

Firms tackle bias now. Diverse data fights amplification. Human checks enter pipelines.

Studies suggest loops can spread flaws from the underlying data. Mitigation combines technical and social fixes. AI firms test balanced inputs.

By 2026, expect smarter controls. Users gain tools to tweak feeds. Change habits. Seek variety. Stay ahead.
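What could a feed control look like? One simple idea is to reserve a few slots for content outside your top genre. The sketch below is hypothetical: `diversified_feed` and its `explore_slots` setting are invented for this post and do not describe any existing platform feature.

```python
def diversified_feed(ranked: list[tuple[str, str]], size: int, explore_slots: int) -> list[str]:
    """Fill a feed from (title, genre) pairs ranked best-first,
    keeping explore_slots places for genres other than the top one."""
    if not ranked:
        return []
    top_genre = ranked[0][1]
    familiar = [title for title, genre in ranked if genre == top_genre]
    fresh = [title for title, genre in ranked if genre != top_genre]
    explore = fresh[:explore_slots]
    exploit = familiar[: size - len(explore)]
    return exploit + explore


ranked = [
    ("Night Chase", "thriller"),
    ("Cold Trail", "thriller"),
    ("Dark Harbor", "thriller"),
    ("Deep Ocean", "documentary"),
    ("Love in Paris", "romcom"),
]

print(diversified_feed(ranked, size=3, explore_slots=0))
print(diversified_feed(ranked, size=3, explore_slots=1))
```

With zero explore slots, the feed is all thrillers. With one, a documentary breaks in, and your reaction to it gives the loop something new to learn from.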

Optimism rules. Tech evolves with care. For bias fixes, check AIMultiple’s 2026 guide.

Feedback loops define your digital world. They cycle your inputs into sharper outputs. Positive ones excite. Negative ones steady. Apps like Netflix and TikTok show them live. Benefits hook you. Dangers narrow views.

Know this, and you control more. Audit your feeds. Like diverse posts. Skip the rut. Share your loop stories below. Subscribe for AI tips. What’s trapping your scroll?