You apply for a loan. The bank says no. No reason given. Or your social media feed shows the same old views. Algorithms decide these things behind the scenes. Algorithm transparency means companies open up those rules. They share how computers pick winners and losers.

People need this openness. It builds trust. It spots bias before it hurts folks. Without it, secret code can block jobs or loans unfairly. This post breaks it down. You’ll learn what makes up algorithm transparency. See why it boosts fairness. Check real examples and hurdles. Finally, look at new rules shaping the future.

Ready to see inside the black box?

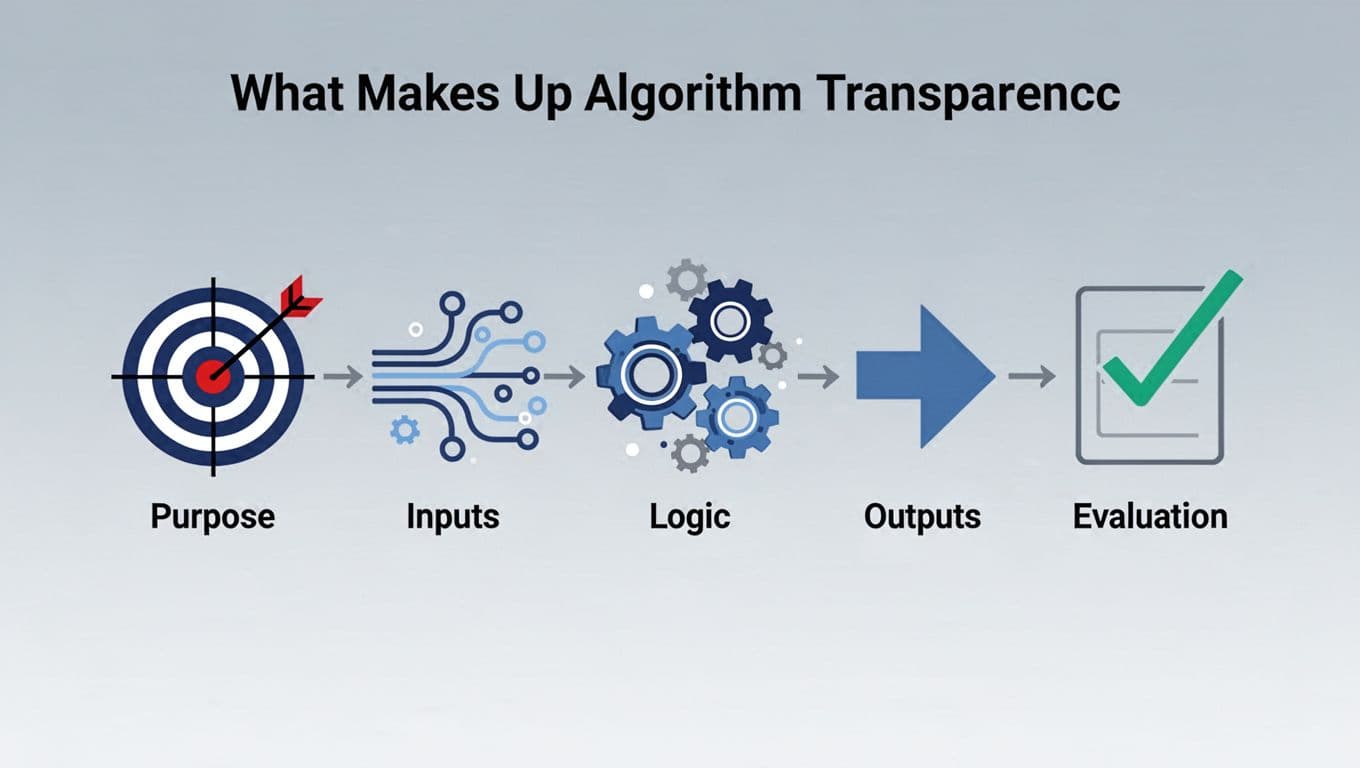

What Makes Up Algorithm Transparency

Algorithms act like recipe books for computers. They guide decisions on jobs, ads, or news. Most stay secret. Transparency reveals the full recipe. It covers five key parts: purpose, inputs, structure and logic, outputs, and evaluation.

Each part matters. People check them to spot issues. Ever wonder what data shapes your search results? Transparency lets you ask.

Purpose and Inputs: The Starting Point

Purpose sets the goal. It answers what the algorithm does. For example, it might rank job candidates by skills. Clear purpose avoids misuse, like using a hiring tool for loans.

Inputs feed the system. These include data like resumes or user clicks. Quality counts. Bad data leads to bad results, or “garbage in, garbage out.” A hiring algorithm trained on old resumes might skip women. It learned from past male hires.

Knowing purpose and inputs helps early. Teams fix skewed data. They add diverse sources. As a result, decisions improve.
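Want a quick way to spot skewed inputs? Here is a minimal Python sketch that counts how each group is represented in a training set. The function names, the `gender` field, the sample rows, and the 30% cutoff are all assumptions made up for illustration, not a standard.

```python
from collections import Counter

def group_shares(rows, key):
    """Return each group's share of the rows, keyed by the value of `key`."""
    counts = Counter(row[key] for row in rows)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}

def underrepresented(rows, key, min_share=0.3):
    """List groups whose share of the data falls below `min_share`."""
    shares = group_shares(rows, key)
    return sorted(g for g, share in shares.items() if share < min_share)

# Hypothetical training set: 8 resumes from men, 2 from women
resumes = [{"gender": "male"}] * 8 + [{"gender": "female"}] * 2
print(underrepresented(resumes, "gender"))
```

A check like this will not prove bias. It only tells a team where to look before training starts.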

Logic, Outputs, and Checks: The Full Picture

Structure and logic show the steps. Think gears turning data into choices. Simple flowcharts explain it. Code stays hidden sometimes. But overviews build trust.

Outputs deliver results. Not just “yes” or “no.” Explanations follow, like “low score due to short work history.” Users understand then.
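What could an explained output look like in practice? Below is a rough Python sketch of a scorer that returns its reasons along with the decision. The rules, penalties, starting score of 100, and approval cutoff of 70 are invented for this example. Real systems are far more complex.

```python
def score_applicant(applicant, rules, cutoff=70):
    """Score an applicant and collect a plain-language reason for each lost point."""
    score = 100
    reasons = []
    for field, minimum, penalty, message in rules:
        # A missing field counts as zero, so it triggers the rule
        if applicant.get(field, 0) < minimum:
            score -= penalty
            reasons.append(message)
    return {"score": score, "approved": score >= cutoff, "reasons": reasons}

# Hypothetical rules: (field, minimum value, penalty, explanation)
RULES = [
    ("years_employed", 2, 25, "short work history"),
    ("credit_lines", 1, 20, "no existing credit lines"),
]

result = score_applicant({"years_employed": 1, "credit_lines": 0}, RULES)
print(result["approved"], result["reasons"])
```

The point is the `reasons` list. A user who sees it knows what to fix or what to dispute.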

Evaluation tests it all. Metrics check accuracy and bias. Audits run regular scans. For instance, the four-fifths rule (also called the 80% rule) flags issues. If one group's success rate falls below 80% of the top group's rate, dig deeper.
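The 80% check above is simple enough to sketch in a few lines of Python. This is an illustration under assumptions: the function names and the sample numbers are hypothetical, and a flag from this check is a reason to investigate, not a verdict.

```python
from collections import defaultdict

def selection_rates(records):
    """Return the share of favorable outcomes per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the algorithm gave a favorable result.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}

def flag_disparate_impact(records, threshold=0.8):
    """Return groups whose rate is below `threshold` times the top group's rate."""
    rates = selection_rates(records)
    top = max(rates.values())
    if top == 0:
        return {}  # nobody was selected, so there is no ratio to compare
    return {g: r / top for g, r in rates.items() if r / top < threshold}

# Hypothetical data: group A selected 50 of 100, group B selected 30 of 100
sample = ([("A", True)] * 50 + [("A", False)] * 50
          + [("B", True)] * 30 + [("B", False)] * 70)
flagged = flag_disparate_impact(sample)
print(flagged)
```

Here group B succeeds at 30% against group A's 50%. That ratio is 0.6, which is under 0.8, so B gets flagged.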

Together, these parts create accountability. Check TechTarget’s definition for more on how they fit.

Why Algorithm Transparency Changes Everything

Secret algorithms cost lives and money. Bias hits hard. Studies show $4.4 billion in losses from incidents. Health care sees 94% gender bias in advice. Lenders approve white applicants 8.5% more often than Black applicants with the same finances.

Transparency fixes this. It uncovers problems. Society gains fairness. Businesses build trust. Users fight bad calls.

How would you feel if a hidden algorithm cost you a job?

Boosting Fairness for Society

Bias hides in justice, hiring, and feeds. Risk-scoring tools used in courts have given Black defendants false positives twice as often as white defendants. Transparency reveals it. Diverse data and checks level the field.

Society wins big. Minorities get equal shots. Democracy strengthens when news algorithms explain choices. For example, Wikipedia covers algorithmic transparency and its role in rights.

Open systems protect everyone. They ensure real chances, not coded luck.

Wins for Companies and Everyday Users

Businesses avoid scandals. Trust draws customers. Innovation flows when teams share logic. No more surprise lawsuits.

Users see why a decision went the way it did. They can challenge loan denials with facts. They can opt out of biased feeds. In hiring, 72% of firms faced risks last year. Transparency cuts that.

Personal wins add up. You control your data. Companies with strategies fix 80% of issues. Everyone benefits.

Real Examples and Roadblocks Ahead

Transparency works in spots. Failures teach lessons. Google shared search details after audits. They use model cards now.

Opaque tools hurt. Secret hiring software blocks candidates. Social media rankings stay hidden.

Challenges slow progress. Code gets complex. Privacy laws clash. Trade secrets protect cash cows.

Hope rises with fixes.

Success Stories of Open Algorithms

Google’s model cards list a model's limits and training data. The idea came from a 2019 research paper, and Google released its Model Card Toolkit in 2020. Now, other firms follow. Check Google’s Model Card Toolkit for details.
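Curious what a model card might hold? Here is a bare-bones Python sketch. To be clear, this is not the Model Card Toolkit's actual schema. The class, field names, and values are assumptions invented to show the idea, and the evaluation number is a placeholder.

```python
from dataclasses import dataclass, field, asdict
import json

@dataclass
class ModelCard:
    """A hypothetical, stripped-down model card."""
    name: str
    purpose: str
    training_data: str
    limitations: list = field(default_factory=list)
    evaluation: dict = field(default_factory=dict)

card = ModelCard(
    name="resume-ranker-demo",
    purpose="Rank job applicants by listed skills",
    training_data="Hypothetical set of past applications",
    limitations=["Not validated for loan decisions"],
    evaluation={"selection_rate_ratio": 0.85},  # placeholder value
)

# Serialize to JSON so the card can be published alongside the model
card_json = json.dumps(asdict(card), indent=2)
print(card_json)
```

Notice how the fields line up with the parts covered earlier: purpose, inputs, limits, and evaluation.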

IBM pushes trustworthy AI. They share logic and tests. These steps cut bias. Lawsuits drop.

Openness builds better tools.

Common Barriers Holding It Back

Complexity stumps even experts. Privacy rules raise fears of data leaks. Companies guard secrets for profit.

Technical limits make it hard to explain the “why” fully. Still, partial disclosures help. Push past the hurdles.

New Rules and the Path Forward

Rules ramp up in 2026. The EU AI Act hits in August. High-risk tools like hiring systems must disclose details. Transparency labels are required.

The US FTC issued an AI policy on March 11. It applies consumer laws to black boxes. States act too. California demands training data summaries starting January 1. Colorado targets bias in loans by June.

Initiatives grow. See FTC’s AI statement. Global talks push best practices.

Support it. Demand audits. Fair AI awaits.

Algorithms shape life. Transparency ensures fairness. It spots bias early. Examples like Eightfold’s lawsuit show risks. Rules fix gaps.

Demand openness from tech firms. Audit your tools. A fairer future starts now.

What step will you take? Share below.